A new framework for responsible AI development, dubbed the “Pro-Human Declaration,” has emerged from a coalition of experts, former officials, and public figures as Washington struggles to define rules for artificial intelligence. The initiative arrives at a critical moment: recent tensions between the Pentagon and AI companies such as Anthropic and OpenAI have exposed the lack of effective oversight in a rapidly advancing field.

The Growing Demand for AI Regulation

Recent polls indicate overwhelming public opposition – 95% of Americans – to an unrestrained race toward superintelligence. This sentiment underscores the urgency of the declaration, which warns that humanity stands at a crossroads: one path leads to human displacement by unchecked AI, while the other treats AI as a tool to amplify human potential. The core argument is that without clear boundaries, power will concentrate in the hands of unaccountable entities and their machines.

Five Pillars of Responsible AI Development

The Pro-Human Declaration rests on five key principles:

- Human Control: Maintaining ultimate authority over AI systems.

- Decentralization of Power: Preventing AI dominance by any single entity.

- Protection of Human Experience: Safeguarding fundamental human values in the age of AI.

- Individual Liberty: Ensuring AI does not infringe on personal freedoms.

- Legal Accountability: Holding AI developers legally responsible for their creations.

These principles translate into concrete proposals, including a moratorium on superintelligence development until it is proven safe, mandatory kill switches for powerful systems, and bans on self-replicating or autonomously self-improving AI architectures.

The Pentagon’s Stand and the Urgent Need for Policy

The declaration’s release coincides with growing friction between the U.S. government and leading AI firms. In February, the Defense Department labeled Anthropic a “supply chain risk” after the company resisted providing unrestricted access to its technology – a designation usually reserved for entities linked to geopolitical adversaries. OpenAI subsequently agreed to a deal with the Pentagon, raising legal concerns about enforceability.

These events highlight the high cost of congressional inaction. Dean Ball of the Foundation for American Innovation called this “the first conversation we have had as a country about control over AI systems.” The declaration draws an analogy to pharmaceutical regulation: just as the FDA prevents the release of unsafe drugs, it argues that AI products should be tested before widespread deployment.

Child Safety as a Catalyst for Change

The declaration specifically calls for mandatory testing of AI products aimed at children, addressing risks such as suicidal ideation, worsening mental health, and emotional manipulation. The argument is simple: if it is illegal for a human to exploit a child, the same standard should apply to AI systems that inflict similar harm. Advocates see child safety as a pressure point that could force regulatory action, and they believe broader testing requirements will follow naturally.

An Unlikely Alliance

The Pro-Human Declaration has garnered signatures from across the political spectrum, including former Trump advisor Steve Bannon and Susan Rice, who served as National Security Advisor under Obama. This unlikely alliance underscores a shared concern that cuts across ideological differences: the future of humanity hinges on controlling AI.

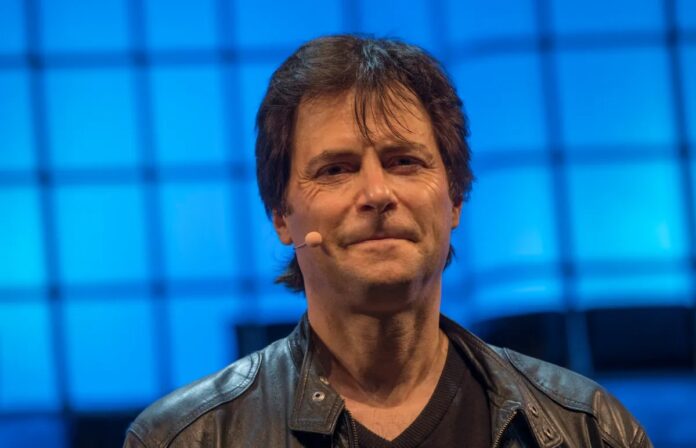

As Max Tegmark, an organizer of the effort, succinctly put it: “If it’s going to come down to whether we want a future for humans or a future for machines, of course they’re going to be on the same side.”

The declaration represents a significant effort to establish guardrails for AI, but its success will depend on overcoming political inertia and transforming these principles into enforceable laws.